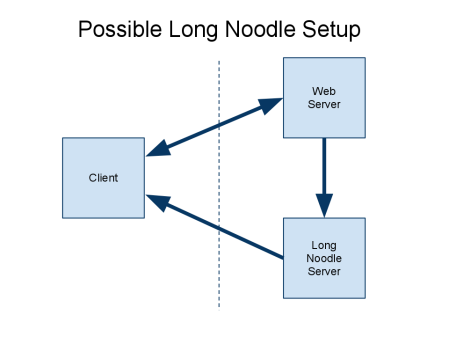

Two weeks ago our marketing site was hit by a Distributed Denial of Service (DDoS) attack. It happened once during the day and again that night. The IP addresses appeared to be Eastern European. Thankfully, our marketing site runs on Heroku, so we simply increased its resources to handle all the requests. Why would someone want to attack our marketing site? They may have been trying to take down our ad server, or perhaps to send bogus contact requests through our contact form. Either way, our marketing site was much more vulnerable than our ad server.

Ad serving is a lot like handling DDoS attacks.

During the attack we received an email from Neustar. They had noticed we were down and offered their DDoS mitigation services. At first this seemed very suspicious. How did they know? Were they the attacker? When I spoke with them later, they explained that they monitor bot traffic to help companies. The representative said they do not pay outside parties for attack data, which I was happy to hear, since paying for that data could encourage more attacks. We decided not to sign up with Neustar and have instead added more protections to our marketing site.

The same week, the lock on the front door of my building broke and I couldn’t get in. There happened to be a locksmith’s business card right outside, so I was all set.

Posted by Chris Hobbs